Clinical diagnosis is traditionally portrayed as the outcome of rational evidence accumulation and systematic analytical reasoning. Yet the phenomenology of clinical decision-making reveals a more complex reality: a cognitive transition in which physicians cease actively generating alternative hypotheses and begin treating a working explanation as sufficiently established for action.

This paper introduces the Belief Stabilization Threshold (BST), defined as the cognitive point in diagnostic reasoning at which a provisional hypothesis becomes psychologically stabilized and begins to function as an operative belief guiding clinical decisions. Drawing on research in diagnostic error science, cognitive psychology, Bayesian reasoning, and philosophy of medicine, the BST framework reconceptualizes premature diagnostic closure not merely as a discrete cognitive bias but as a structural feature of belief formation within the clinician’s reasoning architecture.

The model proposes structured skepticism as a meta-regulatory mechanism capable of reopening hypothesis space and recalibrating probabilistic reasoning. By conceptualizing diagnosis as stabilized belief under uncertainty, the BST framework offers a new theoretical lens for understanding diagnostic commitment, clinical certainty, and the epistemology of medical reasoning.

1. Introduction

Clinical medicine operates in a landscape characterized by pervasive uncertainty. Physicians must make diagnostic decisions despite incomplete information, evolving disease trajectories, and imperfect diagnostic tools. Although modern medicine emphasizes evidence-based reasoning and probabilistic thinking, the lived experience of clinical practice reveals that physicians frequently commit to diagnostic explanations earlier than a purely analytical model would predict—and that this tendency, while often adaptive, carries significant epistemic risk.

Traditional models of diagnostic reasoning depict a sequential process in which clinicians generate a differential diagnosis, collect additional evidence through history, physical examination, and investigations, and gradually narrow the range of possibilities until a single explanation emerges as most plausible. This idealized model treats diagnostic certainty as the natural terminus of thorough evidence accumulation. Yet empirical research on diagnostic error challenges this picture. Errors frequently arise even when sufficient information is available and experienced clinicians are involved—which suggests that the failure lies not in the availability of evidence but in the architecture of the reasoning process itself.

This observation points toward a deeper cognitive architecture governing how diagnostic beliefs emerge, stabilize, and subsequently guide clinical action. Physicians rarely maintain an indefinitely open diagnostic space. Rather, reasoning tends to converge on a particular explanatory interpretation that becomes the dominant framework through which all subsequent evidence is interpreted. Once this convergence occurs, the clinician’s epistemic orientation shifts from inquiry to confirmation—and the diagnostic hypothesis effectively becomes diagnostic fact.

The Belief Stabilization Threshold is introduced to describe and analyze the cognitive moment at which this transition occurs. The BST represents the point at which a diagnostic hypothesis becomes sufficiently convincing—not necessarily sufficiently supported—that clinicians begin treating it as the operative explanation for the patient’s condition. Understanding what determines this threshold, what factors accelerate or decelerate it, and how clinicians can regulate it represents a critical frontier in the science of diagnostic reasoning.

2. Diagnosis as Stabilized Belief

From an epistemological perspective, diagnosis should not be understood simply as the discovery of disease—as the uncovering of an objective pathological reality that exists independently of the knowing clinician. Rather, diagnosis is more accurately characterized as the stabilization of belief under conditions of persistent uncertainty. Physicians interpret clinical data probabilistically and must ultimately determine when a hypothesis has become credible enough to justify clinical action, knowing that the certainty required to eliminate all reasonable doubt is rarely, if ever, available.

Symptoms, laboratory findings, and imaging results rarely provide definitive answers in isolation. Instead, each piece of evidence modifies the perceived likelihood of competing explanatory hypotheses, shifting the probability distribution across the diagnostic space. Clinical practice therefore involves a continuous judgment about when uncertainty has been reduced to a level compatible with responsible action—the moment at which the diagnostic hypothesis becomes an action-guiding belief.

Crucially, the stabilization of belief does not imply that certainty has been achieved. It reflects, rather, the practical necessity of acting despite the persistent incompleteness of knowledge. This is not an epistemological failure; it is an inescapable feature of clinical medicine. The physician who waits for certainty before acting has already made a consequential decision—often a more dangerous one than the physician who acts on well-reasoned probability.

Recognizing diagnosis as a stabilized belief, however, also highlights a fundamental epistemological tension within medicine. Physicians must act decisively while acknowledging that their knowledge remains provisional, subject to revision as new evidence emerges. The ethical and intellectual challenge is to hold these two imperatives—the necessity of action and the humility of uncertainty—in productive tension, rather than allowing the former to extinguish the latter.

3. The Belief Stabilization Threshold

The Belief Stabilization Threshold represents the cognitive point in diagnostic reasoning at which a provisional hypothesis becomes psychologically stabilized and begins guiding clinical decisions as though it were established fact. It is not a consciously chosen moment but a largely implicit transition—a shift in the internal orientation of reasoning from active exploration to operative commitment.

Prior to crossing the BST, clinicians maintain what might be called an exploratory epistemic stance: they actively generate and evaluate alternative explanations, maintain a broad differential diagnosis, and treat each new piece of evidence as potentially discriminating. After the threshold is crossed, the reasoning orientation shifts. The dominant hypothesis no longer competes for explanatory primacy; it occupies that position as a given, and subsequent reasoning is largely organized around its elaboration and confirmation.

Several cognitive characteristics accompany this transition and mark the BST as a recognizable moment in the clinical reasoning process:

• Active hypothesis generation declines: alternative explanations lose salience and recede from working memory.

• Evidence interpretation becomes predominantly confirmatory: new data is processed through the lens of the established hypothesis.

• The clinical narrative acquires internal coherence: disparate observations are woven into a unified explanatory story that feels complete.

• Clinicians become prepared to initiate therapeutic interventions based on the working diagnosis.

It bears emphasis that crossing the BST does not imply diagnostic certainty. Rather, it marks the moment at which subjective confidence—however calibrated or miscalibrated—becomes sufficient for clinical action. The BST is therefore not a threshold of knowledge but a threshold of commitment.

4. BST and Diagnostic Error

The most significant clinical consequence of the belief stabilization threshold lies in its potential to precipitate diagnostic error when crossed prematurely. Premature BST crossing occurs when diagnostic commitment is reached before the evidence has adequately discriminated among competing hypotheses—when the subjective experience of sufficient confidence outpaces the objective weight of available information.

Once a diagnostic belief has stabilized, a cascade of self-reinforcing cognitive processes begins. Evidence supporting the dominant hypothesis receives disproportionate weight and attention. Contradictory or anomalous information may be discounted, reinterpreted within the existing framework, or simply not registered as relevant. This selective engagement with evidence is not a conscious decision; it is a structural consequence of having adopted a particular explanatory commitment.

This mechanism helps explain one of the most vexing phenomena in diagnostic error research: why errors frequently persist and propagate even when new and potentially corrective information is available. The clinician’s interpretive framework has already stabilized, and that framework actively shapes how subsequent evidence is processed. The diagnostic narrative becomes, in effect, self-sealing.

The implications extend beyond individual errors. Because clinical decisions are often layered—each building upon prior diagnostic commitments—premature BST crossing can initiate an error cascade. A misdiagnosis that goes unchallenged shapes the selection of investigations, the choice of treatment, the framing of consultations, and the documentation that future clinicians will inherit. In this way, a single moment of premature stabilization can ramify across an entire clinical trajectory.

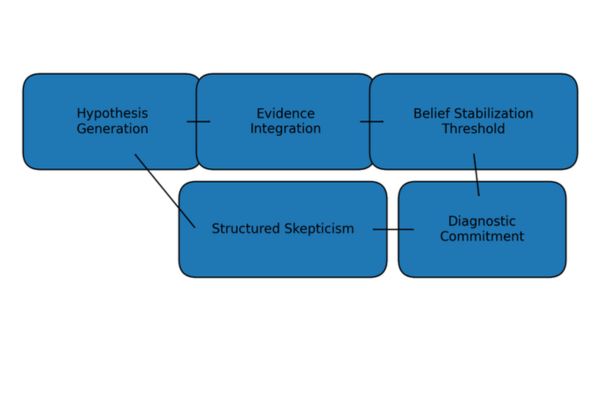

Figure 1. Conceptual Model of the Belief Stabilization Threshold

The trajectory of diagnostic hypothesis strength over time, showing the BST crossing point and the transition from exploratory to confirmatory reasoning.

5. The BST and the Pauker–Kassirer Threshold Model

The Belief Stabilization Threshold can be productively situated within the broader tradition of clinical decision theory—particularly the classical threshold model developed by Stephen Pauker and Jerome Kassirer, which remains a foundational framework in the epistemology of clinical decision-making.

5.1 The Pauker–Kassirer Framework

The Pauker–Kassirer model describes two probabilistic decision thresholds that define the rational boundaries of clinical action. The testing threshold represents the probability below which further diagnostic investigation is unnecessary because the pre-test likelihood of disease is too low to justify the costs and risks of testing. The treatment threshold represents the probability above which treatment should be initiated because the likelihood of disease is sufficiently high to justify therapeutic action. Between these two thresholds lies the zone of diagnostic uncertainty in which further investigation is warranted.

These thresholds are derived from expected utility theory: they reflect the optimal decision points given the sensitivity and specificity of available tests, the risks of testing, and the relative benefits and harms of treatment. The clinician's estimated probability of disease is then compared against them. The model is normative: it prescribes when rational action is warranted based on objective probabilistic and utilitarian considerations.
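As a hedged illustration of how such thresholds fall out of expected utility, the sketch below computes a testing threshold and a treatment threshold from test characteristics and treatment utilities. It uses a simplified derivation in which treating a diseased patient yields a net benefit, treating a non-diseased patient incurs a net harm, and the test carries its own risk. The names `DiagnosticTest`, `thresholds`, and `recommended_action`, and all numeric values, are assumptions introduced for illustration and are not taken from the source model's notation.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class DiagnosticTest:
    sensitivity: float  # P(test positive | disease)
    specificity: float  # P(test negative | no disease)
    test_risk: float    # expected harm of the test itself, in utility units


def thresholds(test: DiagnosticTest, benefit: float, harm: float) -> Tuple[float, float]:
    """Return (testing_threshold, treatment_threshold).

    benefit: net utility gained by treating a patient who has the disease.
    harm:    net utility lost by treating a patient who does not.
    """
    false_pos = 1.0 - test.specificity
    false_neg = 1.0 - test.sensitivity
    # Probability at which "test" and "withhold treatment" have equal expected utility.
    testing = (false_pos * harm + test.test_risk) / (
        false_pos * harm + test.sensitivity * benefit
    )
    # Probability at which "test" and "treat without testing" have equal expected utility.
    treatment = (test.specificity * harm - test.test_risk) / (
        test.specificity * harm + false_neg * benefit
    )
    return testing, treatment


def recommended_action(p_disease: float, testing: float, treatment: float) -> str:
    """Map an estimated disease probability onto the three decision zones."""
    if p_disease < testing:
        return "withhold"
    if p_disease > treatment:
        return "treat"
    return "test"


if __name__ == "__main__":
    # Hypothetical numbers chosen only to make the zones visible.
    example = DiagnosticTest(sensitivity=0.9, specificity=0.9, test_risk=0.0)
    low, high = thresholds(example, benefit=4.0, harm=1.0)
    for p in (0.01, 0.30, 0.90):
        print(p, recommended_action(p, low, high))
```

The sketch makes the normative character of the model concrete: the thresholds depend only on test properties and utilities, not on how confident the clinician happens to feel.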

5.2 Where the BST Intervenes

The Belief Stabilization Threshold addresses a fundamentally different phenomenon: not what rational thresholds prescribe, but the cognitive process through which clinicians come to believe that such thresholds have been reached. The BST is not a normative construct but a descriptive one. It characterizes the psychological moment at which a clinician’s subjective confidence in a diagnosis becomes sufficient for action—irrespective of whether that confidence is objectively calibrated to the evidence.

The two frameworks therefore operate at complementary levels of analysis. The Pauker–Kassirer model describes the decision-theoretic structure of rational clinical action. The BST describes the cognitive and psychological processes through which clinicians actually arrive at the beliefs that trigger those actions. Together, they illuminate both what clinicians should do and why they so often do something different.

5.3 The Gap Between Thresholds

This complementary relationship reveals a clinically significant gap. Premature BST crossing occurs precisely when a clinician’s subjective belief stabilizes at a probability level below the objective treatment threshold—when felt confidence outpaces the warranted confidence the evidence supports. The clinician experiences certainty; the evidence justifies only probability. Treatment is initiated; the Pauker–Kassirer model would prescribe further investigation.

Conversely, the BST framework also illuminates cases in which the treatment threshold has objectively been reached but belief has not yet stabilized—where excessive epistemic caution delays necessary action. In both directions, the distance between the BST and the objective decision threshold is a measure of epistemic miscalibration.
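One way to make this distance explicit, offered only as a notational sketch, is to write the gap as a signed difference. The symbols $p_{\mathrm{BST}}$ (the probability level at which belief actually stabilizes) and $p_{\mathrm{treat}}$ (the normative treatment threshold) are assumptions introduced here, not notation from the Pauker–Kassirer model.

```latex
\[
\Delta = p_{\mathrm{BST}} - p_{\mathrm{treat}},
\qquad
\begin{cases}
\Delta < 0 & \text{premature stabilization (commitment before the threshold is met)}\\[2pt]
\Delta = 0 & \text{calibrated commitment}\\[2pt]
\Delta > 0 & \text{delayed stabilization (excessive epistemic caution)}
\end{cases}
\]
```

Under this convention, the magnitude $|\Delta|$ expresses the degree of miscalibration and the sign expresses its direction.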

Table 1. Comparative Framework: The Pauker–Kassirer Model and the Belief Stabilization Threshold

| Dimension | Pauker–Kassirer Model | Belief Stabilization Threshold |
|---|---|---|
| Nature | Normative: prescribes when rational action is warranted | Descriptive: characterizes when belief psychologically stabilizes |
| Domain | Decision theory and expected utility | Cognitive psychology and epistemology |
| Threshold type | Testing threshold and treatment threshold | Belief stabilization threshold |
| Key question | At what probability should we act? | At what point does the clinician believe the threshold has been reached? |
| Error explained | Misapplied utilities or probability estimates | Premature cognitive closure before the objective threshold is met |

Table 1. The Pauker–Kassirer threshold model and the BST operate at complementary levels: the former is normative and decision-theoretic; the latter is descriptive and cognitive. Their integration offers a fuller account of diagnostic reasoning and its failures.

6. Dual-Process Cognition and the BST

The cognitive mechanisms underlying the belief stabilization threshold can be usefully analyzed through the lens of dual-process theory, which distinguishes between two broad modes of cognition that operate in parallel and interact in complex ways throughout clinical reasoning.

System 1 processes are fast, automatic, and associative. They enable the rapid pattern recognition that underlies much of expert clinical judgment—the experienced clinician who recognizes a presentation ‘at a glance’ or who senses that something is wrong before being able to articulate why. These processes are genuinely powerful and account for a substantial proportion of accurate diagnoses in experienced practitioners. They are, however, poorly equipped to handle novelty, to detect their own errors, or to revise established patterns in response to conflicting evidence.

System 2 processes are slow, deliberate, and rule-governed. They enable systematic analysis, explicit hypothesis testing, and reflective evaluation of reasoning. They are capable of overriding System 1 conclusions when engaged—but they are also cognitively costly, and they are readily suppressed by time pressure, cognitive load, and emotional factors.

The BST represents, in cognitive terms, the moment at which System 2 reasoning shifts from challenging a System 1 hypothesis to justifying it. Prior to the BST, analytical reasoning interrogates the leading hypothesis, testing it against alternatives, evaluating disconfirming evidence, and identifying gaps in the explanatory account. After the BST is crossed, the same analytical resources are recruited in service of elaborating and defending a conclusion that has already been reached.

This shift from interrogation to justification is not always inappropriate; there are clinical situations in which rapid commitment is both necessary and correct. The danger arises when the shift occurs before sufficient analytical scrutiny has been applied—when System 1 pattern recognition drives early BST crossing and System 2 is enlisted to rationalize rather than to evaluate.

7. Bayesian Interpretation of the BST

Within a Bayesian framework, diagnostic reasoning is modeled as a process of continuous probabilistic updating. As new evidence is encountered, prior probabilities are revised according to the likelihood ratio of the evidence, producing posterior probabilities that reflect the updated state of belief. This model captures something important about the structure of diagnostic inference—namely, that it is inherently probabilistic and that each piece of evidence should, in principle, shift the probability distribution across competing hypotheses.
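The updating step described above is commonly written in odds form. In the expression below, $H$ denotes the diagnostic hypothesis and $E$ a new piece of evidence; both symbols are introduced here for illustration.

```latex
\[
\underbrace{\frac{P(H \mid E)}{P(\neg H \mid E)}}_{\text{posterior odds}}
=
\underbrace{\frac{P(H)}{P(\neg H)}}_{\text{prior odds}}
\times
\underbrace{\frac{P(E \mid H)}{P(E \mid \neg H)}}_{\text{likelihood ratio}}
\]
```

A likelihood ratio above 1 raises the odds of $H$, a ratio below 1 lowers them, and a ratio of exactly 1 leaves belief unchanged.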

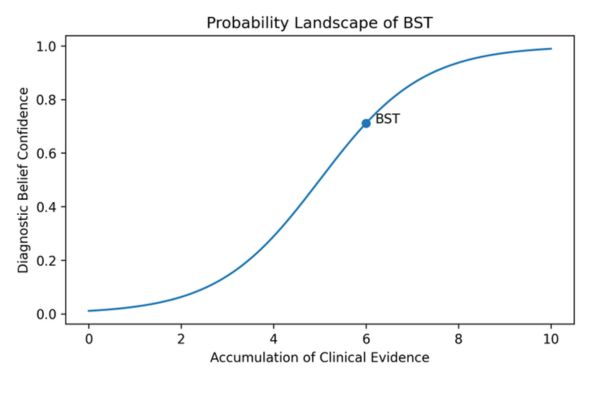

The Belief Stabilization Threshold can be interpreted, within this framework, as the subjective probability level at which a clinician considers a hypothesis sufficiently credible to guide clinical action. Formally, BST crossing occurs when the clinician's subjective estimate of the posterior probability of the leading hypothesis crosses the clinician's action threshold, a value that is not fixed but varies according to context, perceived risk, and the costs of different types of error.

This Bayesian framing clarifies an important distinction: the BST is not equivalent to a Bayesian posterior probability. It is a psychological threshold—a felt sense of sufficient confidence—that may or may not accurately track the mathematical probability of the hypothesis. When the BST is reached at a probability level that is genuinely action-appropriate, premature stabilization is avoided. When it is reached at a lower probability level—driven by cognitive fluency, emotional relief, or environmental pressure rather than by the weight of evidence—premature stabilization occurs, and the conditions for diagnostic error are established.

This discrepancy between felt confidence and calibrated probability is, in essence, the core cognitive mechanism the BST framework seeks to illuminate.
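The discrepancy can be illustrated with a toy simulation, given here as a minimal sketch rather than a model of real clinical data. It updates a prior through a sequence of likelihood ratios and reports the step at which the posterior first reaches a given threshold. The function names, the likelihood ratios, and both threshold values are hypothetical. The sketch also assumes, as a simplification, that felt confidence tracks the computed posterior and that only the commitment level differs.

```python
from typing import Optional, Sequence


def update(prior: float, likelihood_ratio: float) -> float:
    """Odds-form Bayes update: posterior odds = prior odds * likelihood ratio."""
    odds = prior / (1.0 - prior) * likelihood_ratio
    return odds / (1.0 + odds)


def first_crossing(
    prior: float, likelihood_ratios: Sequence[float], threshold: float
) -> Optional[int]:
    """Return the 1-based index of the finding at which the posterior
    first reaches `threshold`, or None if it never does."""
    p = prior
    for step, lr in enumerate(likelihood_ratios, start=1):
        p = update(p, lr)
        if p >= threshold:
            return step
    return None


if __name__ == "__main__":
    # Hypothetical findings: two supportive, one disconfirming, one supportive.
    findings = [3.0, 2.0, 0.5, 4.0]
    felt_threshold = 0.6       # assumed level at which belief stabilizes
    warranted_threshold = 0.8  # assumed action-appropriate level

    print("felt commitment at finding:", first_crossing(0.3, findings, felt_threshold))
    print("warranted commitment at finding:", first_crossing(0.3, findings, warranted_threshold))
```

With these illustrative numbers, commitment at the lower felt threshold occurs before the disconfirming third finding is encountered, whereas the higher threshold is reached only after all four findings have been weighed.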

8. The Epistemic Ecology of Clinical Belief Formation

Clinical reasoning does not occur in an epistemic vacuum. It is embedded within an epistemic ecology composed of cognitive processes, institutional structures, interpersonal dynamics, and technological systems that collectively shape how diagnostic beliefs emerge and stabilize. Understanding the BST requires attending to this ecology, not merely to the individual clinician’s cognition.

8.1 Cognitive Determinants

At the individual cognitive level, BST timing is influenced by the strength and availability of recognized disease patterns, the clinician’s familiarity with the presenting syndrome, and the cognitive fluency with which a coherent diagnostic narrative can be constructed. Presentations that fit readily into familiar templates tend to trigger earlier BST crossing, irrespective of whether that fit is diagnostically appropriate.

8.2 Emotional Determinants

Diagnostic uncertainty is psychologically uncomfortable. The resolution of uncertainty through diagnostic commitment produces a genuine sense of relief—an affective state that functions as an implicit reward, reinforcing the tendency to seek and accept closure. The desire to reduce uncertainty is not merely a cognitive preference; it is an emotional pressure that can drive BST crossing independent of evidential adequacy.

8.3 Environmental and Institutional Determinants

Busy clinical environments, time pressure, high patient volume, and institutional workflow structures that prioritize efficiency over deliberation all create conditions in which premature BST crossing becomes more likely. When the structural conditions for reflective reasoning are absent, clinicians inevitably rely more heavily on rapid intuitive processes—and the BST is crossed earlier, on thinner evidence.

8.4 Social and Hierarchical Determinants

Clinical belief does not form in isolation. Agreement among colleagues, deference to hierarchical authority, and the emergence of team consensus can powerfully stabilize diagnostic beliefs and suppress further inquiry. Once a senior clinician has named a diagnosis, the social cost of reopening the diagnostic space may be perceived as prohibitive, even when the evidence warrants it.

9. Clinical Vignette

The following vignette illustrates the BST in operation within a plausible clinical scenario.

A 58-year-old man presents to the emergency department with central chest discomfort, mild nausea, and mild diaphoresis. He has a well-documented history of gastroesophageal reflux disease and reports that his symptoms began shortly after a large meal. The presenting team, aware of his prior history, rapidly forms a working diagnosis of reflux-related chest pain. The narrative is coherent, the pattern is familiar, and the explanation fits without apparent strain. The BST is crossed early.

The patient is administered antacids and observed. Over the subsequent hours, several features that are atypical for reflux disease emerge: the pain does not fully respond to antacids, the diaphoresis persists, and an electrocardiogram obtained at triage shows subtle ST-segment changes that are noted in the record but not prominently flagged. Because the diagnostic framework has already stabilized, each of these observations is processed through the lens of the established narrative: the antacid response is judged ‘partial,’ the diaphoresis is attributed to anxiety, and the ECG changes are deemed ‘non-specific.’

Figure 2. Probability Landscape of Diagnostic Belief Stabilization

Illustrating how competing hypotheses shift in probability weight over the clinical encounter and where premature versus appropriate BST crossing occurs relative to evidence accumulation.

The patient is discharged with a diagnosis of dyspepsia. He returns eight hours later in complete heart block with cardiogenic shock. The ECG confirms an acute inferior myocardial infarction.

This vignette illustrates how premature BST crossing, driven by the availability of a coherent benign narrative, pattern familiarity, and the implicit pressure of emergency department efficiency, can render subsequent evidence invisible. The disconfirming information was present; it was the interpretive framework that failed to register it.

10. Implications for Medical Education

If the belief stabilization threshold is a structural feature of clinical reasoning rather than a correctable individual deficiency, the educational response must be correspondingly structural. Teaching clinicians to recognize belief stabilization dynamics in real time requires more than the transmission of information about cognitive bias; it requires the cultivation of epistemic habits—durable dispositions of mind that resist premature closure and sustain productive uncertainty.

Diagnostic time-outs, implemented as deliberate pauses in the reasoning process during complex or high-stakes cases, create structural opportunities for System 2 reasoning to re-engage. The explicit question ‘Before we commit to this diagnosis, what have we not yet adequately explained?’ operationalizes the BST framework in practical terms.

Case-based reflective learning, particularly post-hoc analysis of diagnostic errors and near-misses, can help clinicians develop metacognitive awareness of their own BST tendencies. Understanding that premature stabilization is a reproducible cognitive phenomenon—not a personal failing—may reduce the defensiveness that often accompanies diagnostic error discussion and make genuine learning more accessible.

Counterfactual reasoning exercises—in which clinicians are asked to argue the strongest possible case for a diagnosis other than their leading hypothesis—directly challenge the confirmatory orientation that characterizes post-BST reasoning. By making the alternative explicit and compelling, these exercises lower the practical cost of reconsidering a committed diagnostic position.

Finally, fostering a clinical culture in which diagnostic humility is modeled by senior clinicians and in which the revision of a diagnosis is treated as evidence of intellectual integrity rather than professional failure may be among the most powerful and durable educational interventions available.

11. Artificial Intelligence and the BST

Artificial intelligence tools are increasingly integrated into diagnostic workflows, and their implications for the BST merit careful analysis. These systems offer probabilistic predictions derived from large datasets, often with speed and consistency that exceed individual clinician capacity. The potential benefits are genuine and substantial.

However, the BST framework draws attention to a risk that is insufficiently appreciated in current discussions of AI-assisted medicine: algorithmic outputs may accelerate rather than mitigate belief stabilization. When clinicians receive an AI-generated diagnosis—particularly one presented with high stated confidence—the BST may be crossed almost instantaneously, before the clinician has meaningfully engaged with the clinical evidence through independent reasoning. The AI output becomes an anchor so powerful that subsequent evidence processing is organized around confirming it rather than evaluating it.

This risk is compounded by a structural feature of many current AI systems: their opacity. Unlike a consultant colleague whose reasoning can be questioned and challenged, an AI system typically offers a conclusion without accessible reasoning. The clinician cannot evaluate the quality of the inference; they can only accept or reject the output. This opacity undermines the epistemic practices—critical interrogation, identification of disconfirming evidence, reflective evaluation of the reasoning process—that structured skepticism is designed to protect.

The BST framework therefore emphasizes that AI tools should be integrated into clinical reasoning as probabilistic inputs to human judgment, not as replacements for it. Human epistemic oversight—the active, critical engagement of a reasoning clinician with both the evidence and the algorithmic output—remains non-negotiable. An AI system that generates an accurate diagnosis but bypasses human reasoning offers a short-term benefit that may exact a long-term cost: the atrophy of the very reasoning capacities that are needed when the system fails.

12. Conclusion

Diagnosis represents not the discovery of disease but the stabilization of belief within a field of irreducible uncertainty. Every diagnostic commitment is an epistemic act—a reasoned judgment to act as though a particular account of the patient’s condition is sufficiently true, made in full knowledge that certainty is unavailable and that revision remains possible.

The Belief Stabilization Threshold provides a conceptual framework for understanding the cognitive moment at which clinical reasoning transitions from active exploration to operative commitment. When the BST is crossed at an evidentially appropriate point—after adequate hypothesis generation, genuine evaluation of alternatives, and calibrated probabilistic updating—it represents a rational and necessary feature of clinical decision-making. When it is crossed prematurely, driven by cognitive shortcuts, emotional pressures, or environmental constraints, it renders clinical reasoning structurally vulnerable to the propagation of diagnostic error.

Structured skepticism—understood not as a posture of doubt but as a disciplined practice of maintaining epistemic openness through the active interrogation of diagnostic assumptions—offers a practical mechanism for regulating the BST. Its goal is not the elimination of diagnostic commitment, which is neither possible nor desirable, but the cultivation of a clinical mind that reaches commitment at the right moment: on the basis of adequate evidence, after genuine consideration of alternatives, and with a preserved capacity for revision when the evidence demands it.

The aspiration of the BST framework is ultimately a clinical culture in which diagnostic humility and diagnostic decisiveness are understood not as opposites but as complementary virtues—both essential to the epistemically responsible practice of medicine.

References

- Croskerry P. The importance of cognitive errors in diagnosis and strategies to minimize them. Academic Medicine. 2003;78(8):775–780.

- Djulbegovic B, Hozo I, Schwartz A, McMasters KM. Acceptable regret in medical decision making. Medical Hypotheses. 2015;85(1):89–95.

- Epstein RM. Mindful practice in medicine. JAMA. 1999;282(9):833–839.

- Gigerenzer G. Risk Savvy: How to Make Good Decisions. New York: Viking; 2014.

- Graber ML. The incidence of diagnostic error in medicine. BMJ Quality & Safety. 2013;22(Suppl 2):ii21–ii27.

- Kahneman D. Thinking, Fast and Slow. New York: Farrar, Straus and Giroux; 2011.

- Norman G, Eva K. Diagnostic error and clinical reasoning. Medical Education. 2010;44(1):94–100.

- Pauker SG, Kassirer JP. The threshold approach to clinical decision making. New England Journal of Medicine. 1980;302(20):1109–1117.

- Shinde AG. Skepticism as a meta-regulatory tool in clinical reasoning: Toward a logic of medical knowing. SSRN Electronic Journal. 2026.

- Tonelli MR. The philosophical limits of evidence-based medicine. Academic Medicine. 1998;73(12):1234–1240.
